In an effort to understand what happens in the brain when a person reads or considers such abstract ideas as love or justice, Princeton researchers have for the first time matched images of brain activity with categories of words related to the concepts a person is thinking about. The results could lead to a better understanding of how people consider meaning and context when reading or thinking.

The researchers report in the journal Frontiers in Human Neuroscience that they used functional magnetic resonance imaging (fMRI) to identify areas of the brain activated when study participants thought about physical objects such as a carrot, a horse or a house. The researchers then generated a list of topics related to those objects and used the fMRI images to determine the brain activity that words within each topic shared. For instance, thoughts about "eye" and "foot" produced neural stirrings similar to those produced by other words related to body parts.

Once the researchers knew the brain activity a topic sparked, they were able to use fMRI images alone to predict the subjects and words a person likely thought about during the scan. This capability to put people's brain activity into words provides an initial step toward further exploring themes the human brain touches upon during complex thought.

"The basic idea is that whatever subject matter is on someone's mind -- not just topics or concepts, but also emotions, plans or socially oriented thoughts -- is ultimately reflected in the pattern of activity across all areas of his or her brain," said the team's senior researcher, Matthew Botvinick, an associate professor in Princeton's Department of Psychology and in the Princeton Neuroscience Institute.

"The long-term goal is to translate that brain-activity pattern into the words that likely describe the original mental 'subject matter,'" Botvinick said. "One can imagine doing this with any mental content that can be verbalized, not only about objects, but also about people, actions and abstract concepts and relationships. This study is a first step toward that more general goal.

"If we give way to unbridled speculation, one can imagine years from now being able to 'translate' brain activity into written output for people who are unable to communicate otherwise, which is an exciting thing to consider. In the short term, our technique could be used to learn more about the way that concepts are represented at the neural level -- how ideas relate to one another and how they are engaged or activated."

The research, which was published Aug. 23, was funded by a grant from the National Institute of Neurological Disorders and Stroke, part of the National Institutes of Health.

Depicting a person's thoughts through text is a "promising and innovative method" that the Princeton project introduces to the larger goal of correlating brain activity with mental content, said Marcel Just, a professor of psychology at Carnegie Mellon University. The Princeton researchers worked from brain scans Just had previously collected in his lab, but he had no active role in the project.

"The general goal for the future is to understand the neural coding of any thought and any combination of concepts," Just said. "The significance of this work is that it points to a method for interpreting brain activation patterns that correspond to complex thoughts."

Tracking the brain's 'semantic threads'

Largely designed and conducted in Botvinick's lab by lead author and Princeton postdoctoral researcher Francisco Pereira, the study takes a currently popular approach to neuroscience research in a new direction, Botvinick said. He, Pereira and co-author Greg Detre, who earned his Ph.D. from Princeton in 2010, based their work on various research endeavors during the past decade that used brain-activity patterns captured by fMRI to reconstruct pictures that participants viewed during the scan.

"This 'generative' approach -- actually synthesizing something, an artifact, from the brain-imaging data -- is what inspired us in our study, but we generated words rather than pictures," Botvinick said.

"The thought is that there are many things that can be expressed with language that are more difficult to capture in a picture. Our study dealt with concrete objects, things that are easy to put into a picture, but even then there was an interesting difference between generating a picture of a chair and generating a list of words that a person associates with 'chair.'"

Those word associations, lead author Pereira explained, can be thought of as "semantic threads" that can lead people to think of objects and concepts far from the original subject matter yet strangely related.

"Someone will start thinking of a chair and their mind wanders to the chair of a corporation then to Chairman Mao -- you'd be surprised," Pereira said. "The brain tends to drift, with multiple processes taking place at the same time. If a person thinks about a table, then a lot of related words will come to mind, too. And we thought that if we want to understand what is in a person's mind when they think about anything concrete, we can follow those words."

Pereira and his co-authors worked from fMRI images of brain activity that a team led by Just and fellow Carnegie Mellon researcher Tom Mitchell, a professor of computer science, published in the journal Science in 2008. For those scans, nine people were presented with the word for and a drawing of five concrete objects from each of 12 categories. The drawing and word for each of the 60 objects were displayed in random order until each had been shown six times. Each time a drawing and word appeared, participants were asked to visualize the object and its properties for three seconds as the fMRI scanner recorded their brain activity.

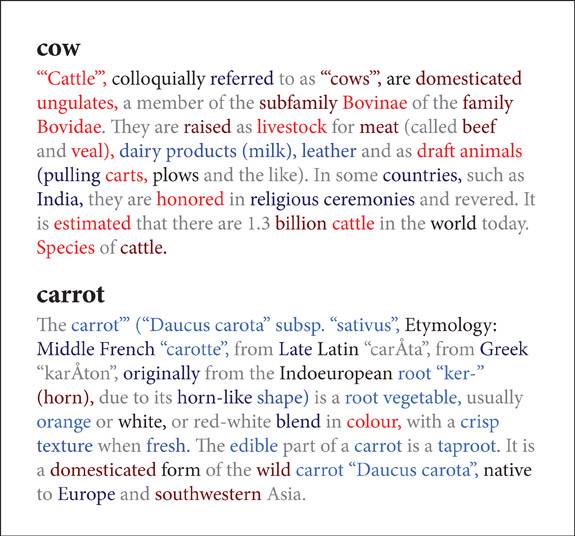

Princeton researchers developed a method to determine the probability of various words being associated with the object a person thought about during a brain scan. They produced color-coded figures that illustrate the probability of words within the Wikipedia article about the object the participant saw during the scan actually being associated with the object. The more red a word is, the more likely a person is to associate it, in this case, with "cow." On the other hand, bright blue suggests a strong correlation with "carrot." Black and grey "neutral" words had no specific association or were not considered at all. (Illustration courtesy of Francisco Pereira)

Matching words and brain activity with related topics

Separately, Pereira and Detre constructed a list of topics with which to categorize the fMRI data. They used a computer program developed by Princeton Associate Professor of Computer Science David Blei to condense 3,500 articles about concrete objects from the online encyclopedia Wikipedia into the topics the articles covered. The articles included a broad array of subjects, such as an airplane, heroin, birds and manual transmission. The program came up with 40 possible topics -- such as aviation, drugs, animals or machinery -- to which the articles could relate. Each topic was defined by the words most associated with it.

The computer ultimately created a database of topics and associated words that were free from the researchers' biases, Pereira said.
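To make the idea of a topic "defined by the words most associated with it" concrete, here is a minimal sketch in Python. It does not reproduce the program the researchers used, which the article does not describe in detail. The topic names come from the article, but the words, the weights and the `top_words` helper are all invented for illustration.

```python
# Hypothetical topic-to-word weights; every number here is made up.
# A real topic model would learn such weights from the article text.
topic_word_weights = {
    "aviation": {"aircraft": 0.31, "wing": 0.22, "engine": 0.18,
                 "pilot": 0.15, "leaf": 0.01},
    "animals": {"species": 0.28, "bird": 0.24, "feather": 0.17,
                "nest": 0.12, "engine": 0.02},
}


def top_words(weights, n=3):
    """Return the n highest-weighted words for one topic, strongest first."""
    ranked = sorted(weights.items(), key=lambda item: item[1], reverse=True)
    return [word for word, _ in ranked[:n]]


# Each topic is summarized by its most strongly associated words.
topic_keywords = {topic: top_words(w) for topic, w in topic_word_weights.items()}

for topic, words in topic_keywords.items():
    print(topic, "->", ", ".join(words))
```

A word such as "engine" can carry some weight in more than one topic. Under this illustrative setup, that is why a topic is described by a ranked list rather than by a fixed set of members.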

"We let the software discern the factors that make up meaning rather than stipulating it ourselves," he said. "There is always a danger that we could impose our preconceived notions of the meaning words have. Plus, I can identify and describe, for instance, a bird, but I don't think I can list all the characteristics that make a bird a bird. So instead of postulating, we let the computer find semantic threads in an unsupervised manner."

The topic database let the researchers objectively arrange the fMRI images by subject matter, Pereira said. To do so, the team searched the brain scans of related objects for similar activity to determine common brain patterns for an entire subject. The neural response for thinking about "furniture," for example, was determined by the common patterns found in the fMRI images for "table," "chair," "bed," "desk" and "dresser." At the same time, the team established all the words associated with "furniture" by matching each fMRI image with related words from the Wikipedia-based list.

Based on the similar brain activity and related words, Pereira, Botvinick and Detre concluded that the same neural response would appear whenever a person thought of any of the words related to furniture, Pereira said. And a scientist analyzing that brain activity would know that person was thinking of furniture. The same would follow for any topic.
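One simple way to picture a "common pattern" for a topic is to average the activity recorded for several related objects. The Python sketch below does only that. Plain averaging is a stand-in chosen for illustration, not the statistical method used in the study, and the four-value activity vectors and the `mean_pattern` helper are hypothetical.

```python
def mean_pattern(patterns):
    """Average several equal-length activity vectors element by element."""
    count = len(patterns)
    return [sum(values) / count for values in zip(*patterns)]


# Hypothetical activity at four brain locations for three furniture words.
# Real fMRI images contain many thousands of such values.
furniture_scans = {
    "table": [0.8, 0.1, 0.6, 0.2],
    "chair": [0.6, 0.3, 0.8, 0.0],
    "bed": [0.7, 0.2, 0.7, 0.1],
}

# Activity shared across the objects survives averaging; quirks of any
# single object are diluted.
furniture_pattern = mean_pattern(list(furniture_scans.values()))
print([round(value, 2) for value in furniture_pattern])
```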

Using images to predict the words on a person's mind

Finally, to test the accuracy of their method, the researchers conducted a blind comparison of each of the 60 fMRI images against each of the others. Without knowing which objects a given pair of scans pertained to, Pereira and his colleagues estimated the presence of certain topics on a participant's mind based solely on the fMRI data. Knowing the applicable Wikipedia topics for a given brain image, and the keywords for each topic, they could predict the most likely set of words associated with the brain image.

The researchers found that they could confidently determine from an fMRI image the general topic on a participant's mind, but that deciphering specific objects was trickier, Pereira said. For example, they could compare the fMRI scan for "carrot" against that for "cow" and safely say that at the time the participant had thought about vegetables in the first example instead of animals. In turn, they could say that the person most likely thought of other words related to vegetables, as opposed to words related to animals.

On the other hand, when the scan for "carrot" was compared to that for "celery," Pereira and his colleagues knew the participant had thought of vegetables, but they could not identify related words unique to either object.
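The contrast between the "carrot"/"cow" and "carrot"/"celery" comparisons can be sketched as a matching problem: given topic estimates from two scans and the topic profiles of two candidate objects, which pairing fits better? The Python sketch below is an illustration under stated assumptions. Cosine similarity, the pairing rule, the `match_margin` helper and every number are hypothetical and are not taken from the study.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def match_margin(scan_a, scan_b, profile_1, profile_2):
    """How much better the pairing (a with 1, b with 2) fits than the swap.

    A margin near zero means the two pairings are almost equally plausible.
    """
    straight = cosine(scan_a, profile_1) + cosine(scan_b, profile_2)
    swapped = cosine(scan_a, profile_2) + cosine(scan_b, profile_1)
    return straight - swapped


# Hypothetical topic scores in the order [vegetables, animals, machinery].
profiles = {
    "carrot": [0.90, 0.05, 0.05],
    "celery": [0.88, 0.06, 0.06],
    "cow": [0.10, 0.85, 0.05],
}

# Hypothetical topic estimates decoded from three scans.
scan_x = [0.80, 0.10, 0.10]  # vegetable-like
scan_y = [0.20, 0.70, 0.10]  # animal-like
scan_z = [0.82, 0.09, 0.09]  # also vegetable-like

easy = match_margin(scan_x, scan_y, profiles["carrot"], profiles["cow"])
hard = match_margin(scan_x, scan_z, profiles["carrot"], profiles["celery"])
print(f"carrot vs cow margin:    {easy:.3f}")
print(f"carrot vs celery margin: {hard:.4f}")
```

With these made-up numbers, the first margin is large because the two objects fall under different topics, while the second is close to zero because both objects share the same topic profile.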

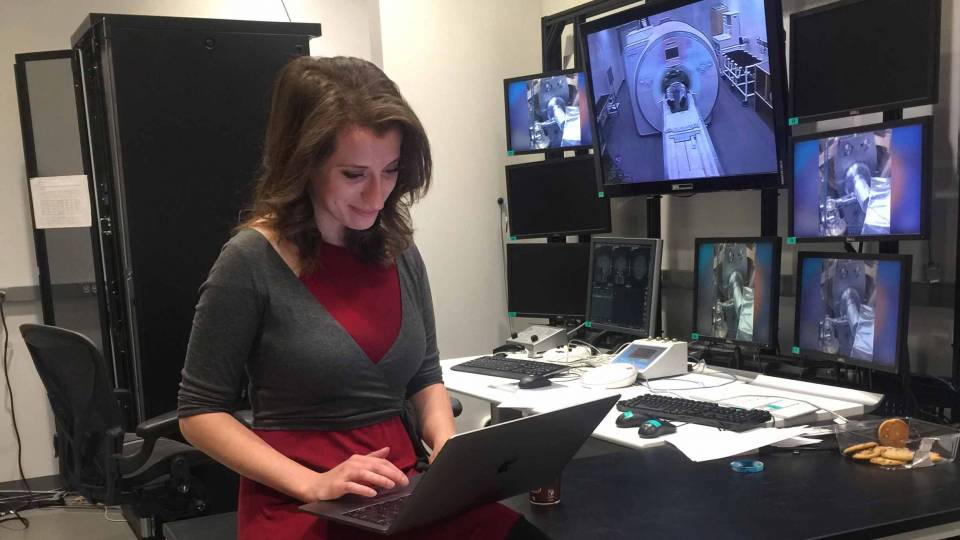

One aim going forward, Pereira said, is to fine-tune the group's method to be more sensitive to such detail. In addition, he and Botvinick have begun performing fMRI scans on people as they read in an effort to observe the various topics the mind accesses.

"Essentially," Pereira said, "we have found a way to generally identify mental content through the text related to it. We can now expand that capability to even further open the door to describing thoughts that are not amenable to being depicted with pictures."