| Web Exclusives: TigersRoar |

Fallacy of the Assumption of Statistical Independence Between Successive Indeterminate Events

by Thomas V. Gillman ’49

As a preface, I would like to mention a concept that bears on the relation between physics, as encompassed by science, and metaphysics, the branch of philosophy that treats of the ultimate nature of existence, reality, and experience.

Recent research in cosmology indicates that there exists a universal wave

function that determines everything in the universe. This unique field

gives being to the recognized physical fields: the gravitational and electromagnetic forces, the strong force that, among other things, is responsible for the sun shining, and the weak force instrumental in radioactive decay. The

further possibility exists that life is a direct expression of the effects

of such a "force" field and that the evolutionary properties

of living matter provide evidence of the indeterminate nature of the universal

wave function.

There is a simple experiment that I believe demonstrates the existence

and the workings of such a universal wave function. The results, at the

very least, represent an instance of the indeterminate nature of the probability

aspect of wave mechanics at the macro level.

A Heuristic Experiment

The procedure is simply a matter of flipping a coin and recording the

result of each toss, head or tail, as well as the sequence of the results

for an arbitrarily large number of tosses, something on the order of 100

tosses. No attempt is made to maintain a uniform time interval between

tosses, since time apparently does not enter in.

The expectation is that in the long term the number of resulting heads

and tails will be approximately equal, since the likelihood of a head

or tail is 1/2. According to probability theory each toss of the coin

is an independent event; therefore, there is not supposed to be any relation

between successive tosses of the coin. The probability of any particular

sequence of heads or tails is therefore the product of their "independent"

probabilities. For example, the probability of tossing seven heads in

succession would be (1/2)⁷ or 1/128, a fairly unlikely sequence of events

but well within the range of expectation. On the other hand, it is common

knowledge that in many games of chance players often experience "runs

of luck" in which the outcome temporarily favors them. How are such

unlikely courses of events to be explained?
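The product rule described above can be checked with exact fractions. The following is a minimal sketch, assuming a fair coin and independent tosses as the passage does; the function name `sequence_probability` is an illustrative choice and not part of the original experiment.

```python
from fractions import Fraction

def sequence_probability(sequence, p_head=Fraction(1, 2)):
    """Probability of one specific sequence of 'H'/'T' outcomes,
    assuming independent tosses (the assumption under discussion)."""
    prob = Fraction(1)
    for outcome in sequence:
        if outcome == "H":
            prob *= p_head
        elif outcome == "T":
            prob *= 1 - p_head
        else:
            raise ValueError(f"unexpected outcome: {outcome!r}")
    return prob

print(sequence_probability("HHHHHHH"))  # 1/128
```

Under this assumption any other specific sequence of seven tosses, such as "HTHTHTH", has the same probability of 1/128.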

In conducting this experiment it is common that over the course of 100

or so tosses a sequence of six or more heads or tails will occur.

Even longer sequences are interrupted by only one or two inverse events,

thus establishing a trend or a "run" as it is often described.
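For comparison, the independence assumption itself can be simulated to see how often runs of this length appear in 100 tosses. This is only a sketch: it assumes Python's pseudorandom generator as a stand-in for a fair coin, and the function names, trial count, and seed are illustrative choices rather than the procedure used in the experiment.

```python
import random

def longest_run(tosses):
    """Length of the longest run of identical consecutive outcomes."""
    best = current = 0
    previous = None
    for outcome in tosses:
        current = current + 1 if outcome == previous else 1
        best = max(best, current)
        previous = outcome
    return best

def fraction_with_long_run(n_tosses=100, min_run=6, trials=10_000, seed=0):
    """Share of simulated experiments whose longest run is at least min_run."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        tosses = [rng.choice("HT") for _ in range(n_tosses)]
        if longest_run(tosses) >= min_run:
            hits += 1
    return hits / trials

print(longest_run("HHTHHHHHHT"))  # 6
print(fraction_with_long_run())   # share of simulated experiments with a run of 6 or more
```

Running `longest_run` on a recorded sequence of real tosses gives a figure that can be set beside the simulated share.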

Graphic Results

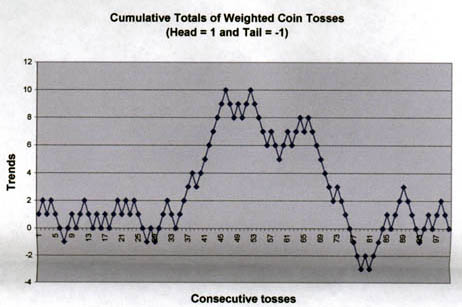

If one plots the sequence of tosses on the horizontal axis and the algebraic

results of the coin tossing on the vertical axis, with the simple assumption

that each toss represents a unit gain or loss of some sort of "potential"

from one toss to the next, some fascinating patterns emerge. These correspond

to the so-called "runs" of good or bad luck that gamblers experience.

A more interesting finding is that these deviations tend to propagate

or persist. That is, the number of heads or tails sometimes does not even

out for long sequences. [Note that these sequences are time independent

and therefore do not represent periods, but they do seem to indicate a

"progression".]
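The vertical axis of such a plot can be computed as a running total. The sketch below assumes a sign convention of +1 for a head and -1 for a tail, which the text does not specify; the name `cumulative_potential` is likewise illustrative.

```python
from itertools import accumulate

def cumulative_potential(tosses):
    """Running total with +1 for each head and -1 for each tail
    (assumed convention; the essay only speaks of a unit gain or loss)."""
    steps = [1 if outcome == "H" else -1 for outcome in tosses]
    return list(accumulate(steps))

print(cumulative_potential("HHTHTTTH"))  # [1, 2, 1, 2, 1, 0, -1, 0]
```

Plotting the returned list against the toss number reproduces the kind of graph described here, with a return to zero marking the point where heads and tails have evened out.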

A question that comes to mind is whether or not the cumulative "potential"

indicated on the graphs provides evidence for the existence of a deterministic

element that enters into these results. The gambler will tell you that

when he is on a "roll" he is able to "influence" the

course of events. Who is to say? What is obvious is that if one bets in

concert with one of these "potential" swings, these apparent "drifts" of the probability function, one is going to be ahead

of the odds for an indeterminate but substantial number of events!

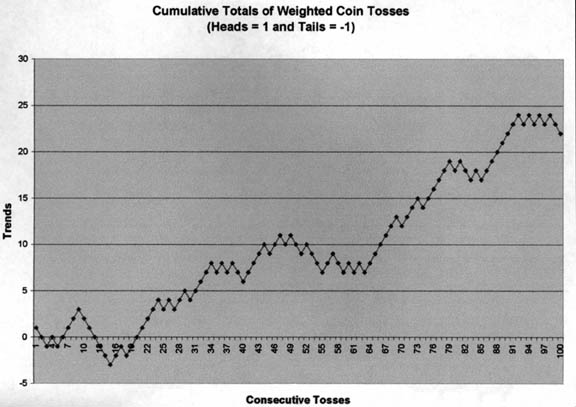

Whereas in the previous plot, the number of opposing events (heads and

tails) is not far from the expected proportion, viz., 50:50, this is not

always the case, as shown in the following graph.

Hypothesis

While there is little basis for conclusion at this stage, the results

do lead to speculation. The partially determinate nature of the outcomes

of events that have traditionally been treated as indeterminate, random,

and independent may point to the possible fluctuations of something of

the nature of a general field which "determines" the course

of events. Events at the macro level are usually analyzed in terms of

cause and effect, but there are many events where the outcomes cannot

be predicted but can only be described statistically.

Another instance is radioactive decay at the subatomic level. Anyone who

has listened to a Geiger counter knows that these events occur randomly

but in time-wise bursts that exhibit no regularity. And there is no way

of predicting the decay of a particular radioactive atom in terms of time

or location. The best we can do is to establish a so-called half-life

— a period during which half of the atoms originally present will

have decayed. We have no way of knowing what conditions, if any, "cause"

the occurrence of the decay event.
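The half-life description can be written as a short calculation. This is a minimal sketch of the standard exponential decay law, in which the expected fraction remaining after a time t is (1/2) raised to the power t divided by the half-life; the function names are illustrative, and the result is a statistical expectation for a large sample, not a prediction for any one atom.

```python
def fraction_remaining(elapsed, half_life):
    """Expected fraction of atoms not yet decayed after `elapsed` time."""
    return 0.5 ** (elapsed / half_life)

def decay_probability(elapsed, half_life):
    """Probability that a single atom has decayed within `elapsed` time."""
    return 1.0 - fraction_remaining(elapsed, half_life)

print(fraction_remaining(10.0, 10.0))  # 0.5
print(fraction_remaining(30.0, 10.0))  # 0.125
```

Both arguments must be in the same time unit, whatever that unit is.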

The most that we know, at present, is something about the elementary particles

of matter that are involved in the process. It has been discovered that,

in the vernacular of elementary particle physics, the "weak"

interaction causes radioactive decay of nuclear constituents and unstable

leptons, and is mediated by the massive W and Z bosons. Whatever precipitates

(causes) these weak interactions within a space-time frame is unknown,

at least as far as I am aware.

Further speculation

Now wouldn't it be interesting if it were found that what we call probability

is nothing more than the way in which the occurrence of events is modulated

by something in the nature of a general wave? Further, wouldn't it be

a kick if the effects of a general field are reflected in the activities

of living matter, the primary characteristic of which is purposiveness

or goal-oriented behavior?

Suppose that living matter has the power to causally influence

the outcome of events. This would help to explain the apparent evolutionary

discontinuities that are reflected in the geologic record. This leads to the further possibility that evolution occurs not as a result of environmental change but as a reflection of the implicit capability of living matter to effect change as a way of adapting to changing environmental requirements or opportunities. This is in direct contrast to Darwin's theory of the survival

of the fittest, or the occurrence of natural selection among the chance

variants or "sports" that are speculated to arise spontaneously.

Running parallel to such speculation is the heuristic work of the engineer

and behaviorist William Powers. (William T. Powers, Living Control Systems: Selected Papers (Gravel Switch, KY: The Control Systems Group, Inc., 1989).)

He shows that control in living systems is neither subject to chance nor

to the causal control of outside agencies. Behavior is not a direct response

to external stimuli but is under the direct and nonprobabilistic control

of feedback mechanisms built into the organism. We find that this cybernetic

mechanism is typical of living organisms and, therefore, is a major design

aspect implicit in the life functions.

Returning to a consideration of the results of the coin-toss experiment,

the necessary next experiment would be to look to the identification of

something in the nature of a bifurcation that will predict the onset of

another probability swing or trend in the course of action. That appears

to be the nature of evolutionary change, indeed of all change. If such

change can be controlled, as we attempt to do through planning, then we

have evidence for the intervention of a life force (willpower?) in the

determination of the outcome of events.

The Statistical Postulate of Quantum Mechanics

In a discussion of quantum mechanics, physicist Victor J. Stenger (Victor J. Stenger, The Unconscious Quantum (Amherst, NY: Prometheus Books, 1995), pp. 56-60) indicates:

"In 1926 Max Born proposed what was to become a primary postulate

of quantum mechanics in the von Neumann scheme. According to this postulate,

the wave function is used to compute the probability P for a particle

to be found in a particular state. This probability was to be proportional

to |ψ|², the square of the magnitude of the wave function ψ .... This postulate was extended by Wolfgang Pauli to include the probability for finding a particle at a particular position.

"Pauli proposed that the probability P for finding a particle in an infinitesimal volume element ΔV located in a specific region of space is equal to the square of the magnitude of the wave function ψ computed at that point multiplied by ΔV: P = |ψ|² ΔV. Since we can measure volume in any units we wish, no loss of generality occurs if we assume a unit volume, ΔV = 1, and simply write P = |ψ|² and understand it to mean probability per unit volume, that is, probability density."
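The quantity in the quoted postulate can be illustrated numerically. The sketch below treats the wave function at a single point as an ordinary complex number; the sample amplitude and volume element are hypothetical values chosen only for illustration, and the function names are not drawn from Stenger's text. For any complex value the squared magnitude comes out as a real, non-negative number.

```python
def probability_density(psi):
    """Squared magnitude of a complex wave-function value, |psi|^2."""
    return abs(psi) ** 2

def probability_in_volume(psi, delta_v):
    """P = |psi|^2 * delta_V for a small volume element delta_v."""
    return probability_density(psi) * delta_v

psi = complex(0.6, 0.8)  # hypothetical amplitude, chosen for illustration
print(probability_density(psi))          # a real number close to 1.0
print(probability_in_volume(psi, 0.01))  # close to 0.01
```

Setting `delta_v` to 1 reproduces the unit-volume convention in the quotation, so that the density and the probability are numerically the same.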

Paraphrasing Pauli's postulate in terms of my conjecture about a universal

wave: The probability of an event, that is, conversion of the energy of

the universal wave into a material state at a particular place (the result

of the toss of a coin is thought of as equivalent to the conversion of

energy into a particle) is equal to the square of the wave function, ψ. Mathematically, squaring of the quantum "state" converts it from imaginary and complex to real and rational, and this would be analogous to the occurrence of a real event. A positive value may correspond to a constructive (energy-binding) event typical of the action of living systems, while a negative value would represent a destructive (entropic) event.

Stenger mentions that the role of statistics in quantum mechanics was

supported by Einstein's calculation of the probabilities for atomic transitions;

however, the uncertain nature of its predictions was one of the aspects

that Einstein found unsatisfying about quantum mechanics. Einstein is

well known for having said, 'God does not play dice.' What he was really

objecting to was the notion that statistics was the final word. He found

it hard to accept that no underlying causal laws determined the behavior

of individual quantum particles at the most fundamental level. As Stenger

explains,

"Actually, statistics enters quantum mechanics only in an indirect way. The time-dependent Schrödinger equation predicts the exact value of the wave function ψ at future times given its value at some initial time. Probability enters with the Born postulate when the time comes to make a prediction on the expected value of some measurement."

When such a measurement is attempted, however, we are attempting to calculate

at some instant in time when in fact the application of the Schrödinger equation varies constantly over time. So we are left with a probability distribution rather than a specific value at tₓ.

Thereby, quantum mechanics is often said to be "deterministic"

in that its basic equation, the Schrödinger equation, precisely determines

the time evolution of the wave function. However, it is indeterministic

in the sense that knowledge of the wave function is not always

sufficient to predict the outcome of a measurement — or of an event.

By the probability postulate, the wave function allows for the prediction

of the average motion of a system [the probable outcome of a coin

toss] but not the outcome in any particular instance, which is what the

above experiment demonstrates. I believe that we are approaching the time

when some deterministic theory will evolve that goes beyond quantum mechanics

and which applies to individual quantum systems as well as to causal events

at the macro level.

According to the recent theoretical development of Dr. Frank Tipler, we

have an all-pervasive physical field which gives being to all being, which gives life to all living things, and which itself is generated by

the ultimate life which it defines. Through this "physical"

field we humans are apparently capable of superimposing our own wills

on the ordinarily indeterminate laws of probability and the chaotic physical

laws that prevail throughout the universe. This we do when we exercise

our intellect and creativity.

This is some of the speculative thinking that can serve as a precursor

to further theoretical development — and it comes from reaction to

a portion of an article by Billy Goodman '80 in the January 29, 2003, issue of PAW entitled "Thinking

about Thinking." Now, what are the odds against that development?
